In 2001, a research-grade humanoid cost $1.5 million and could barely hold a pose. By 2024, the Unitree G1 shipped at $16,000 and could perform backflips. The cost curve for humanoid robotics is following a trajectory that looks less like aerospace and more like consumer electronics — and the underlying AI has only just arrived.

At xSpecies AI, we started from a simple observation: the biggest barrier to robots working alongside people is not hardware anymore. It is intelligence. And the kind of intelligence required for the physical world — the ability to see, reason, plan, and act across an infinite variety of tasks, objects, and environments — cannot be hand-coded. It has to be learned.

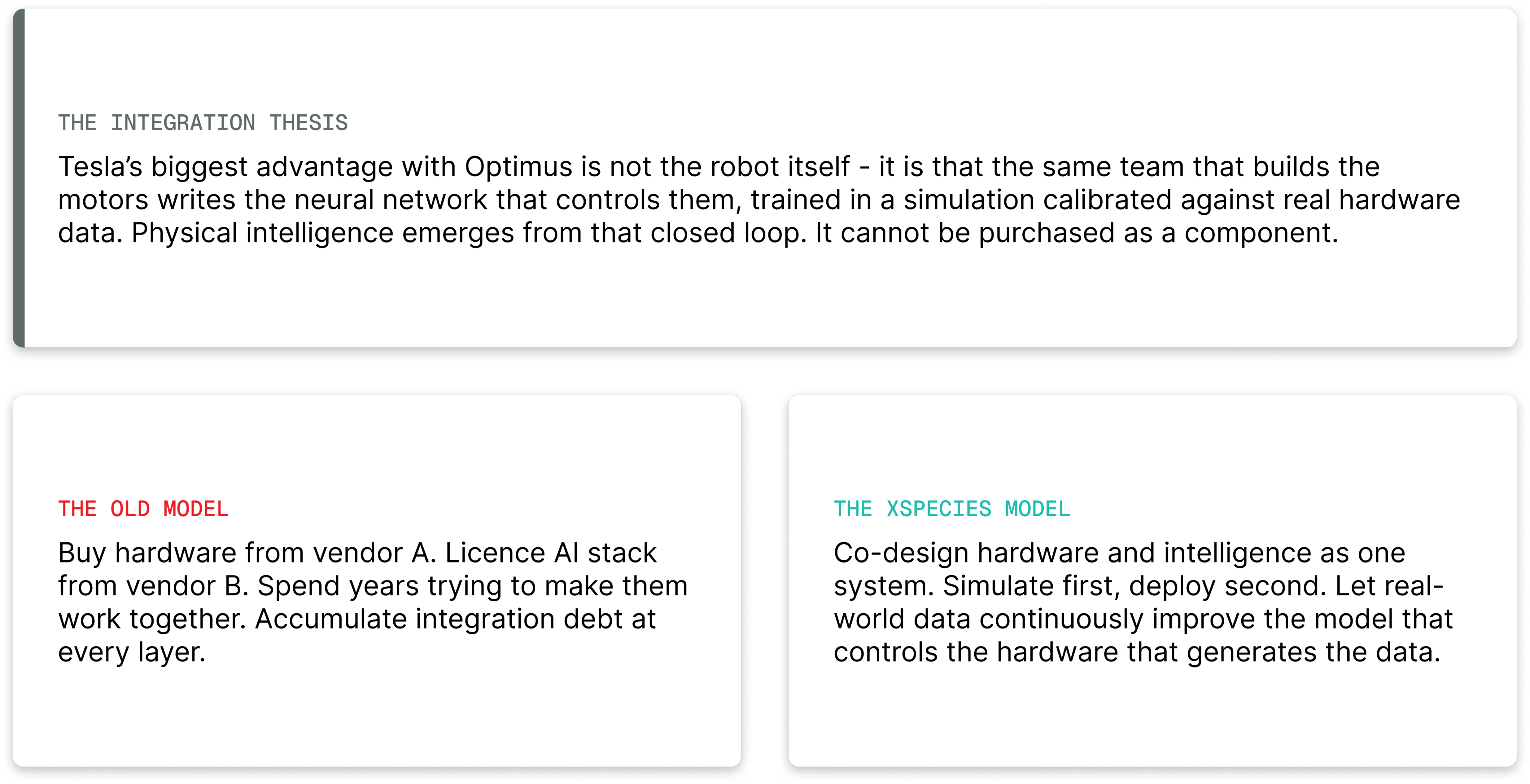

But intelligence alone is not enough either. The companies that will win this decade are those that vertically integrate hardware and software — teams that co-design the robot's body and mind together, closing the loop between physical sensation and learned behaviour at every layer. That integration advantage is not replicable by a software team that buys a robot off the shelf, nor by a hardware team bolting on an AI model as an afterthought.

That is the founding conviction behind xSpecies AI. We are building an AI-native humanoid platform — one where dexterous hardware, proprioceptive sensing, simulation infrastructure, and learned intelligence are designed as a single system, not assembled from parts.

Walk through any large warehouse, hospital supply chain, or eldercare facility and you will notice the same thing: an enormous amount of critical, repetitive, physically demanding work being done by people who are increasingly hard to hire and harder to retain.

India's manufacturing sector faces an estimated 44% labour shortfall; healthcare and pharma face 42%. These are not problems you can patch with software: they require physical agents in the world. At the same time, the global conversation about robots replacing workers misses the more urgent story, which is robots filling roles that humans are no longer available or willing to take.

For India, this is not just a deployment opportunity. It is a chance to become the manufacturing, AI, and innovation hub for embodied intelligence — the way Bangalore became the world's software back office, but now for physical AI.

Japan's "Super Smart Society" initiative, China's National Robot Development Strategy, and the flood of capital into humanoid companies all point to the same conclusion: the race to deploy general-purpose humanoids is no longer speculative. It is happening. And India — with its deep engineering talent, large-scale industrial base, and acute labour gaps — has a unique window to lead, not follow.

Most robotics ventures make one of two bets. The first bet is hardware: build a capable robot body and assume the software will follow. The second bet is software: write intelligent control policies and assume they can run on any robot. Both bets have produced impressive demos. Neither has produced a deployable, general-purpose robot at scale.

The reason is that hardware and software for robotics are deeply coupled. The choice of actuator affects what reward signals are observable. The choice of sensing modality constrains which perception models are viable. The sim-to-real gap is not a software problem or a hardware problem — it is a system integration problem. Teams that own both sides of that boundary can close it. Teams that don't, can't.

This is why xSpecies AI is building custom dexterous hardware alongside our AI stack — not buying off-the-shelf hands and hoping the software adapts. It is why we are investing in our own tactile sensing rather than adopting whatever is commercially available. The tightest feedback loop between the physical and the learned is our core structural advantage.

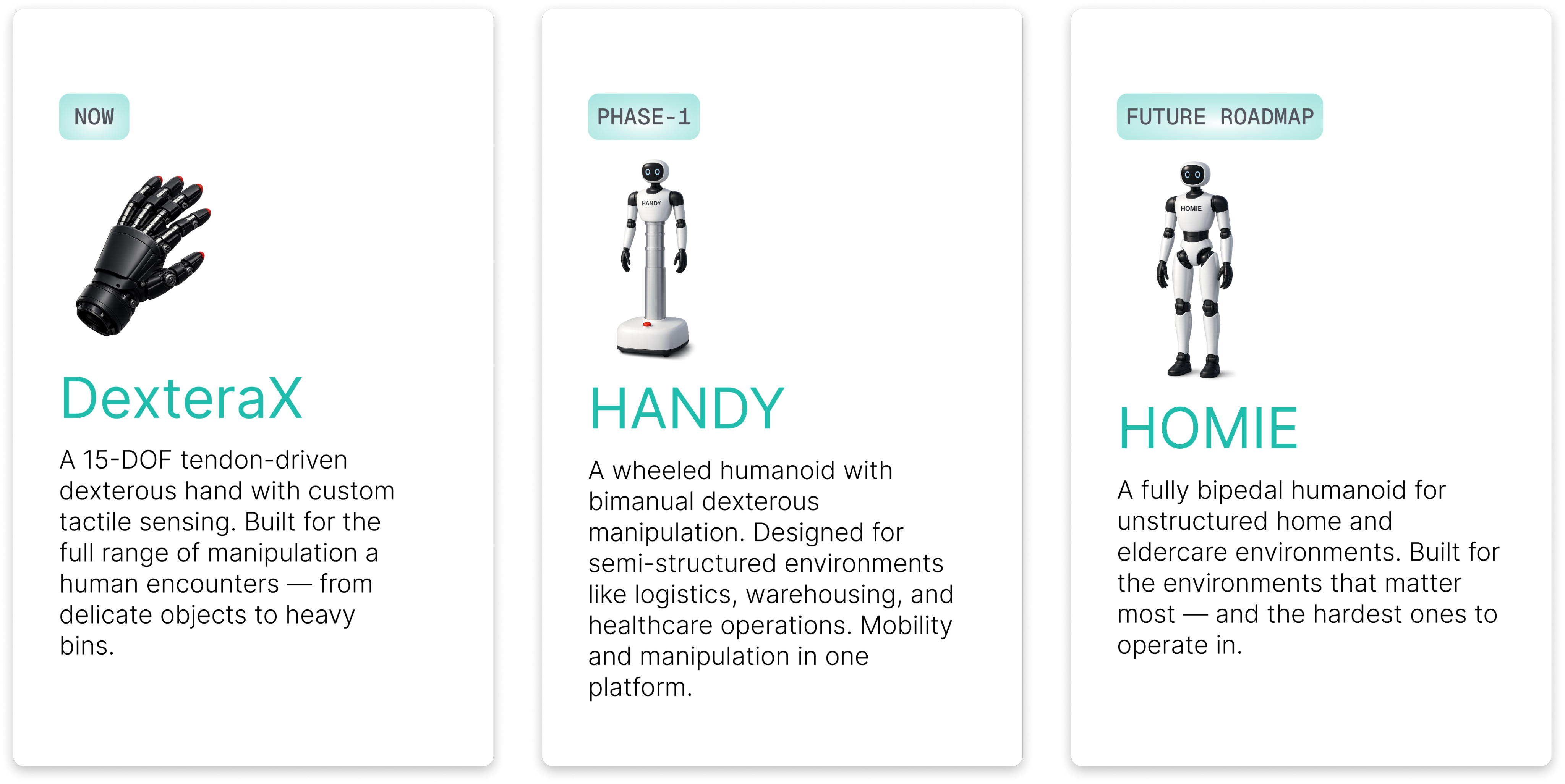

xSpecies AI is developing three complementary products, each representing a step in the progression toward a fully general humanoid platform — united by a single intelligence framework we call AIWare.

The sequencing is deliberate. DexteraX is our technical foundation — proving out dexterous manipulation and custom sensing. HANDY is our commercial entry point — a capable, affordable platform that generates real deployment data. Homie is the long-term vision: a robot that lives and works alongside humans in the home. Each product informs the next.

Most humanoid robotics teams start with locomotion — getting the robot to walk — and treat manipulation as a later-stage problem. We invert this. Manipulation is the harder problem and the more commercially valuable one. A robot that can walk but not use its hands is a spectacle. A robot with capable hands is a worker.

Our entire intelligence stack is designed around manipulation first, with locomotion as the enabling substrate that gets the hands where they need to be.

DexteraX is built around a tendon-driven architecture inspired by the human hand, where motors housed in the palm transmit forces to finger segments through tendons routed over pulleys. This gives us slimmer, more anthropomorphic finger profiles and lower distal inertia — critical for fast, responsive manipulation.
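The tendon-and-pulley relationship described above can be sketched with two textbook formulas: tendon excursion is the sum of pulley radius times joint angle, and the torque at each joint is pulley radius times tendon tension. This is a simplified illustration, not the DexteraX design; the pulley radii, joint angles, and tension are hypothetical values, and friction and tendon stretch are ignored.

```python
import math

def tendon_excursion(pulley_radii_m, joint_angles_rad):
    """Length of tendon taken up when each joint it crosses rotates by its angle."""
    return sum(r * q for r, q in zip(pulley_radii_m, joint_angles_rad))

def joint_torques(pulley_radii_m, tension_n):
    """Torque a single tendon applies at each joint it crosses (ideal pulleys)."""
    return [r * tension_n for r in pulley_radii_m]

# Hypothetical three-joint finger: radii shrink toward the fingertip.
radii = [0.008, 0.006, 0.004]  # metres (assumed values)
angles = [math.radians(45), math.radians(60), math.radians(30)]

excursion = tendon_excursion(radii, angles)      # metres of tendon travel
torques = joint_torques(radii, tension_n=20.0)   # newton-metres per joint
```

Because the motors sit in the palm, only the tendon and pulleys travel with the finger, which is where the lower distal inertia comes from.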

We are developing custom tactile sensing designed specifically for DexteraX's geometry and operational requirements. Off-the-shelf tactile sensors are too bulky, too fragile, or too poorly calibrated for the contact forces and object diversity our hand encounters. Our approach embeds sensing at the hardware level, giving the robot a genuine sense of touch that feeds directly into our learned manipulation policies.

For manipulation intelligence, we use a dual-system architecture. System 1 is a fast, reactive policy running at high frequency for closed-loop contact control. System 2 is a vision-language model for high-level reasoning, instruction understanding, and task planning. System 1 handles the how; System 2 handles the what and why. Together, they give HANDY the ability to understand a spoken instruction, plan a sequence of actions, and execute it with physical precision.
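The split between a slow planner and a fast controller can be sketched as two nested loops. Everything here is a stand-in: `system2_plan` is a hypothetical lookup table in place of a vision-language model, `system1_step` is a proportional controller in place of a learned policy, and the task is reduced to a one-dimensional gripper position.

```python
from dataclasses import dataclass

@dataclass
class Subgoal:
    name: str
    target_position: float  # 1-D gripper target in metres (toy setting)

def system2_plan(instruction: str) -> list[Subgoal]:
    """Stand-in for the slow planner: map an instruction to ordered subgoals."""
    plans = {"pick up the cup": [Subgoal("reach", 0.30), Subgoal("lift", 0.50)]}
    return plans.get(instruction, [])

def system1_step(position: float, subgoal: Subgoal, gain: float = 0.2) -> float:
    """Stand-in for the fast policy: one proportional step toward the target."""
    return position + gain * (subgoal.target_position - position)

def run(instruction: str, start: float = 0.0,
        tolerance: float = 0.005, max_ticks: int = 200):
    position, completed = start, []
    for subgoal in system2_plan(instruction):      # slow loop: one pass per subgoal
        for _ in range(max_ticks):                 # fast loop: many ticks per subgoal
            if abs(subgoal.target_position - position) <= tolerance:
                completed.append(subgoal.name)
                break
            position = system1_step(position, subgoal)
    return position, completed

position, completed = run("pick up the cup")
```

The design point is the rate separation: the planner is consulted rarely, while the reactive loop runs every tick.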

Across all tasks — manipulation, locomotion, and navigation — our approach is reinforcement learning first, augmented by human demonstration data. We use motion capture data and teleoperation recordings to seed imitation learning, then push further with RL to exceed human-demonstrated performance and discover novel strategies.
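A toy version of "seed with demonstrations, then improve with RL" is sketched below. The setting is an assumption made for illustration: a one-dimensional task where the policy is a single gain, demonstrations come from a deliberately suboptimal demonstrator, and random-search hill climbing stands in for a full RL algorithm.

```python
import random

def rollout_return(gain: float, x0: float = 1.0, horizon: int = 20) -> float:
    """Drive state x toward 0 with action a = -gain * x; return is -sum of x^2."""
    x, total = x0, 0.0
    for _ in range(horizon):
        x = x + (-gain * x)
        total -= x * x
    return total

def behaviour_clone(demos) -> float:
    """Least-squares fit of the gain from (state, action) demonstration pairs."""
    num = sum(x * a for x, a in demos)
    den = sum(x * x for x, _ in demos)
    return -num / den

def improve(gain: float, rng: random.Random,
            iterations: int = 200, step: float = 0.05) -> float:
    """Hill climbing: keep a perturbed gain only if its return is higher."""
    best, best_return = gain, rollout_return(gain)
    for _ in range(iterations):
        candidate = best + rng.gauss(0.0, step)
        candidate_return = rollout_return(candidate)
        if candidate_return > best_return:
            best, best_return = candidate, candidate_return
    return best

rng = random.Random(0)
# Synthetic demonstrations from a demonstrator using gain 0.5 (optimum is 1.0).
states = [rng.uniform(-1.0, 1.0) for _ in range(50)]
demos = [(x, -0.5 * x + rng.gauss(0.0, 0.01)) for x in states]

cloned_gain = behaviour_clone(demos)     # imitation: close to the demonstrator
tuned_gain = improve(cloned_gain, rng)   # improvement: moves toward the optimum
```

The cloned policy can only be as good as its demonstrations; the improvement step is what lets the return exceed the demonstrated level.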

One of our core research investments is in automated reward design. Manual reward engineering is the biggest bottleneck in robot learning today. Getting the right reward function for a new task — one that doesn't produce reward hacking or brittle policies — typically requires significant human expertise and extensive iteration. We are building systems that discover reward functions automatically, reducing this bottleneck and enabling faster adaptation to new tasks and environments. This is an area of active research and IP development at xSpecies AI.

For locomotion, we use adversarial motion learning — a framework that simultaneously optimises for task completion and naturalness of movement. Rather than hand-coding gait patterns, we train a discriminator on reference human motion data. The policy learns to produce motion that is statistically indistinguishable from how a human moves, while still achieving the task goal.
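One common way to combine the two objectives, used in published adversarial-motion-prior work, is to turn the discriminator's score into a bounded style reward and add it to the task reward. The sketch below assumes that formulation; the source does not state which variant is used, and the weights are hypothetical.

```python
def style_reward(discriminator_score: float) -> float:
    """Least-squares style reward: 1.0 when the score is +1 (reads as reference
    motion), falling to 0.0 once the score is 2 or more away from +1."""
    return max(0.0, 1.0 - 0.25 * (discriminator_score - 1.0) ** 2)

def combined_reward(task_reward: float, discriminator_score: float,
                    task_weight: float = 0.5, style_weight: float = 0.5) -> float:
    """Weighted sum of task progress and motion naturalness (weights assumed)."""
    return task_weight * task_reward + style_weight * style_reward(discriminator_score)

natural = combined_reward(task_reward=1.0, discriminator_score=1.0)
unnatural = combined_reward(task_reward=1.0, discriminator_score=-1.0)
```

With equal weights, a policy that completes the task with unnatural motion earns only half the reward of one that completes it with human-like motion.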

The result is locomotion that is not just functional but naturally human-like: arm-swing, hip-roll, gait cadence — emerging from data, not from rules. This matters for deployment in human environments, where jerky or unnatural robot motion creates discomfort and erodes trust. We also use phase-variable parameterisations to encode different gait patterns — walking, running, or adaptive stepping — through the same reward framework, without requiring separate policies for each.
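A phase variable can be sketched as a clock that wraps from 0 to 1 once per gait cycle, with each gait described by a few parameters rather than a separate policy. The parameter names, gait values, and contact-matching reward below are assumptions for illustration, not the reward framework described above.

```python
import math

# Hypothetical gait table: a duty factor above 0.5 gives double support (walking);
# below 0.5 gives a flight phase (running).
GAITS = {
    "walk": {"cycle_period_s": 1.0, "duty_factor": 0.60},
    "run": {"cycle_period_s": 0.6, "duty_factor": 0.35},
}

def phase_features(time_s: float, cycle_period_s: float):
    """Phase in [0, 1) plus a sin/cos encoding that is continuous across the wrap."""
    phase = (time_s / cycle_period_s) % 1.0
    return phase, math.sin(2 * math.pi * phase), math.cos(2 * math.pi * phase)

def expected_contacts(phase: float, duty_factor: float, right_offset: float = 0.5):
    """Which feet should be on the ground; the right foot lags by half a cycle."""
    left = phase < duty_factor
    right = ((phase + right_offset) % 1.0) < duty_factor
    return left, right

def contact_reward(actual_contacts, time_s: float, gait: str) -> float:
    """Fraction of feet whose contact state matches the gait's schedule."""
    params = GAITS[gait]
    phase, _, _ = phase_features(time_s, params["cycle_period_s"])
    expected = expected_contacts(phase, params["duty_factor"])
    return sum(1.0 for a, e in zip(actual_contacts, expected) if a == e) / 2.0

walk_contacts = expected_contacts(phase_features(0.55, 1.0)[0], 0.60)
run_contacts = expected_contacts(phase_features(0.24, 0.6)[0], 0.35)
```

Switching gait changes only the entries read from `GAITS`; the reward function itself is shared.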

We train at scale in simulation — using Isaac Lab for high-fidelity physics and GPU-parallelised environments — and close the sim-to-real gap through systematic calibration of our simulator against real hardware behaviour. This is where vertical integration pays off most directly: owning the hardware means we can run controlled experiments to characterise joint friction, actuator dynamics, and contact mechanics precisely, and feed those measurements back into the simulator.
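As a small example of the calibration step, joint friction can be estimated from bench measurements by least squares. The sketch assumes a simple viscous-plus-Coulomb model, torque = b·v + c·sign(v), and uses synthetic placeholder data rather than real measurements; it does not use any Isaac Lab API.

```python
def sign(value: float) -> int:
    return (value > 0) - (value < 0)

def fit_friction(velocities, torques):
    """Least-squares fit of torque = viscous * v + coulomb * sign(v).

    Solves the 2x2 normal equations directly with Cramer's rule.
    """
    s_vv = sum(v * v for v in velocities)
    s_vs = sum(abs(v) for v in velocities)          # v * sign(v) = |v|
    s_ss = sum(sign(v) ** 2 for v in velocities)    # count of non-zero velocities
    s_vt = sum(v * t for v, t in zip(velocities, torques))
    s_st = sum(sign(v) * t for v, t in zip(velocities, torques))
    det = s_vv * s_ss - s_vs * s_vs
    if abs(det) < 1e-12:
        raise ValueError("velocities do not excite both friction terms")
    viscous = (s_vt * s_ss - s_vs * s_st) / det
    coulomb = (s_vv * s_st - s_vs * s_vt) / det
    return viscous, coulomb

# Synthetic data generated from viscous = 0.3, coulomb = 0.1 (placeholder values).
velocities = [-2.0, -1.0, -0.5, 0.5, 1.0, 2.0]
torques = [0.3 * v + 0.1 * sign(v) for v in velocities]

viscous, coulomb = fit_friction(velocities, torques)
```

The fitted coefficients would then be written into the simulator's joint model so that simulated and measured torques agree.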

We also use domain randomisation — varying textures, friction, mass, and lighting during training — to produce policies that generalise robustly across the real-world variation our robots will encounter in warehouses, hospitals, and homes.
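At its simplest, domain randomisation means drawing a fresh set of physical and visual parameters at the start of every training episode. The sketch below is framework-agnostic; the parameter ranges, the texture count, and the `EpisodeParams` structure are all hypothetical, and a real setup would pass these values to the simulator's own randomisation hooks.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class EpisodeParams:
    friction: float         # surface friction coefficient
    mass_scale: float       # multiplier on nominal object mass
    light_intensity: float  # relative scene brightness
    texture_id: int         # index into a texture bank

# Assumed ranges, chosen only for illustration.
RANGES = {
    "friction": (0.4, 1.2),
    "mass_scale": (0.8, 1.2),
    "light_intensity": (0.3, 1.5),
}
NUM_TEXTURES = 32

def sample_episode_params(rng: random.Random) -> EpisodeParams:
    """Draw one independent sample per parameter for a new episode."""
    return EpisodeParams(
        friction=rng.uniform(*RANGES["friction"]),
        mass_scale=rng.uniform(*RANGES["mass_scale"]),
        light_intensity=rng.uniform(*RANGES["light_intensity"]),
        texture_id=rng.randrange(NUM_TEXTURES),
    )

rng = random.Random(42)
episodes = [sample_episode_params(rng) for _ in range(100)]
```

A policy trained across many such draws cannot rely on any single friction value or lighting condition, which is what pushes it to generalise.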

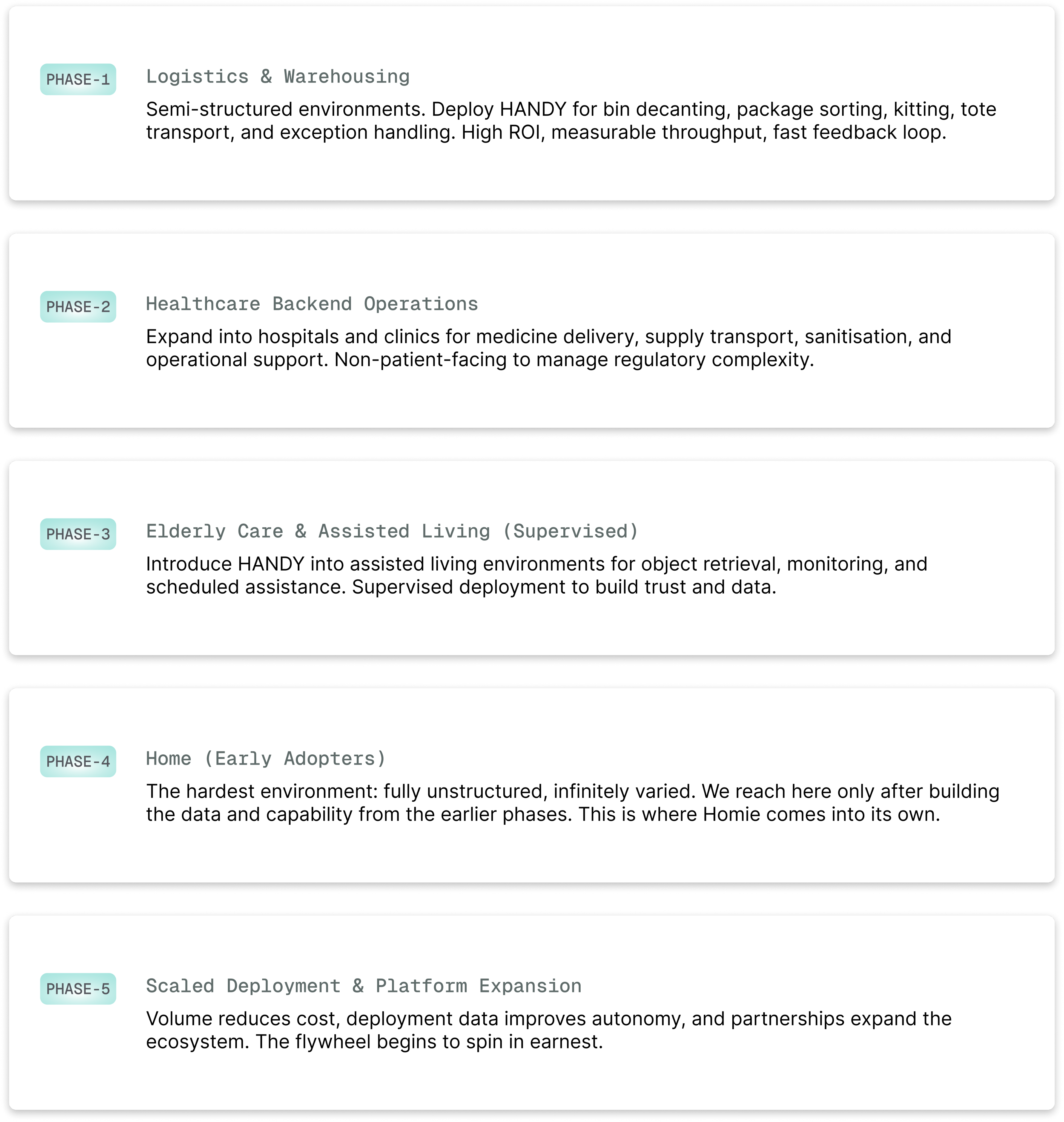

We are not trying to solve all of robotics at once. Our go-to-market strategy is deliberately sequenced from structured to unstructured environments, learning and compounding capability at each stage.

Our pricing model follows the same logic: hardware-first to deploy and learn, then layering on software subscriptions (Skill Library access, OTA updates, eldercare capability packs), B2B partnerships, and data licensing as the platform matures. We target payback in under 18 months for our first logistics customers, making the ROI conversation straightforward.
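The payback arithmetic behind that target is simple: upfront cost divided by net monthly saving. The figures in this sketch are placeholders chosen to make the calculation concrete, not xSpecies AI pricing or customer data.

```python
def payback_months(upfront_cost: float, monthly_saving: float,
                   monthly_subscription: float = 0.0) -> float:
    """Months until cumulative net savings cover the upfront hardware cost."""
    net_saving = monthly_saving - monthly_subscription
    if net_saving <= 0:
        raise ValueError("no payback: subscription meets or exceeds savings")
    return upfront_cost / net_saving

# Placeholder inputs (hypothetical): 30,000 upfront, 2,500 saved per month,
# 500 per month in software subscriptions.
months = payback_months(30_000, 2_500, 500)
meets_target = months < 18
```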

The components that make this possible have all matured simultaneously: foundation models that generalise from video and language, simulation platforms that let us run thousands of parallel RL environments, affordable hardware that closes the BoM gap, and large-scale datasets of human motion for imitation learning. No single one of these was sufficient. Together, they create a step change.

India's moment in this wave is real. The talent pool in robotics, reinforcement learning, and embedded systems is deep. The manufacturing ecosystem is growing. And the labour market pressures — in logistics, healthcare, and eldercare — are acute enough that customers are actively looking for solutions, not waiting to be convinced.

What xSpecies AI brings is a combination of RL-first engineering culture, automated reward discovery, co-designed hardware and intelligence, and a focus on the India-specific deployment context: the objects, environments, and workflows that our robots will actually encounter. Our localised demonstration data, our understanding of Indian operational environments, and our cost-optimised hardware roadmap are advantages that are difficult to replicate from abroad.

The question is no longer whether humanoid robots will work in the real world. The question is who vertically integrates hardware and intelligence well enough to make them genuinely useful — and who does it first for the billion-person markets that others haven't prioritised.

We are pre-MVP, with hardware procurement complete and a working system expected in the near term. Our immediate priorities are building out the teleoperation pipeline for human demonstration data collection, maturing our manipulation stack on top of large foundation models, and closing our first logistics design-partner deployment.

If you are a logistics operator, healthcare system, or eldercare facility interested in piloting, we would love to talk.

The long arc of AI is bending toward the physical world. We are building the robots that will inhabit it.

From homes to retail to logistics, we’re building robots that integrate naturally into the spaces people rely on every day.
